Introduction and Overview

This chapter begins with comments on structuring a session and an overview of techniques aimed at cultivating resilience among those seeking help for addictive disorders. The premise is that addiction is a default state that will almost inevitably manifest itself to some degree between sessions. The therapist therefore needs to attend closely to what is happening in the consulting room, while remaining alert to how latent cognitive vulnerability factors, such as attentional bias, implicit approach tendencies and faulty decision making, are likely to influence the treatment-seeker in the coming days and weeks. The next section of the chapter will introduce and evaluate techniques, derived from emerging cognitive neuroscience findings, that aim to enhance cognitive control. These fall under the following categories:

- cognitive bias and behavioural approach reversal

- neuropharmacological techniques

- cognitive enhancement

- neurorehabilitation (brain training techniques)

- cognitive control techniques.

Following on from this, I shall outline three therapeutic strategies that the addiction therapist can use ‘out of the box’: behavioural experiments, forming implementation intentions and contingency management. These tried and tested therapeutic tactics serve to increase cognitive control in diverse, and sometimes unintended, ways.

Structuring the Session

Following one or more sessions assigned to motivation and engagement, the format for the subsequent sessions adheres to a conventional cognitive therapy structure. The emphasis is on consolidating and maintaining a collaborative stance in the face of what is, at least in part, a treatment-resistant syndrome. While flexibility is important, the typical session would include the following phases.

- Update on developments since previous encounter, with particular emphasis on any expression of addictive behaviour, negative mood states and current concerns.

- Setting the agenda, possibly asking the client to specify priorities if the list of concerns or problematic issues is extensive. Specifying the stage of treatment (one of the Four Ms), for example managing impulses, managing mood or maintaining change.

- Reviewing any between-session homework assignments

- Introducing and then elaborating on the primary topic of the session, for example coping with impulses

- Negotiating homework for the coming week, for example an implementation strategy or a behavioural experiment

- Summary and feedback

- Scheduling the next appointment, reinforcing the importance of attendance even if the homework has not achieved its therapeutic objectives.

Building Resilience

The central theme of this text is that addiction emerges and endures because of progressive failures in self-regulation, specifically with regard to impulse control. The aim is to elucidate the mechanisms where possible and to apply any insights gained to improve outcomes. To recap, addictive behaviour acquires momentum due to the powerful incentives offered by drugs and gambling. Through repetition and an apparent indemnity against loss of value, addictive behaviours acquire a degree of autonomy that often overwhelms the individual’s resolve to give up. In parallel, repeated ingestion of drugs appears to be associated with abnormalities in cognitive control. By analogy, it is surely unfortunate if, while driving, the accelerator jams or at the very least overreacts to the driver’s right foot. The situation would, however, be immeasurably worse should the brakes begin to fail at the same time. Assume, further, that this mechanical meltdown takes place in just a fraction of a second, before the driver can realize the immediate danger. This, then, is how I see the challenge faced by those attempting to overcome the compulsive habits that characterize addiction. This struggle can take its toll on the commitment of the individual and the resolve of the therapist. It is therefore imperative that the therapist uses every available opportunity to build resilience and emphasize the client’s strengths. The message to the client needs to be nuanced along the lines of ‘while you need to be very much on your guard in those situations when you used cocaine that we just discussed, I know that there are also many occasions when you showed greater restraint and coped really well’.

Kuyken et al. (2009, p. 108) suggested that the following questions can be used to identify beliefs and attributes linked to resilience. I have modified and paraphrased them to match the present context.

- What rules or beliefs can help you be resilient in the face of craving and urges?

- What rules or beliefs should you follow if you are suddenly offered your drug of choice?

- Ideally, what qualities would you like to show when you come face to face with an opportunity to buy [your drug of choice]?

- What beliefs [about you/others/the world] help you display these qualities?

- If you coped in the best way you can imagine, what would you be thinking [about yourself/others/the world]?

Eliciting strengths, associated positive beliefs and anticipatory coping strategies related to oneself and others is, of course, good practice with a range of psychological disorders. Further, from a cognitive control perspective, this refocuses attention and directly influences the contents of WM (e.g. through the formation of a prospective memory encoded as the implementation intention ‘If I am offered cocaine, then I will politely but firmly say no’). The assumption here is that identifying cognitive control tasks that draw on WM capacity will, if deployed appropriately, disrupt the default goal of drug acquisition that is facilitated by preferential attentional allocation.

Impulse Control

Impulsivity is a multifaceted construct including processes such as behavioural inhibition, impaired decision making and lapses in attention (de Wit, 2009). Psychometric studies have revealed further attributes of the construct of impulse control. Whiteside et al. (2005) factor analysed widely used measures of impulsivity and extracted four orthogonal factors and the conventional extraversion dimension.

Emerging findings from cognitive neuroscience have provided significant new insights into response inhibition, or impulse control. A striking finding is that behaviour, especially highly motivated or well-practised responses, can be initiated and to some degree proceed in the absence of conscious awareness. Note that the relevant models (e.g. Dijksterhuis and Aarts, 2010; Strack and Deutsch, 2004) do not propose that behaviour proceeds in the absence of attention, but rather that it can progress in the absence of conscious awareness. This is because the allocation of attention can be dissociated from conscious awareness, specifically at the early stages of stimulus appraisal and encoding. Thus, when a cue has appetitive motivational significance, its accelerated attentional processing can trigger approach behaviour before controlled cognition can be mobilized to inhibit the approach tendency, assuming restraint is the goal.

Craving and Urge Report

The notion that craving precedes, and therefore drives, addictive behaviour has strong intuitive appeal for addicted people and often for their friends, families and therapists. On this view, while craving should be anticipated and avoided whenever possible, it at least has some utility as a final warning of imminent irresolution. Empirical findings have shown that this is not necessarily the case. Carter and Tiffany (1999) highlighted the inconsistent relationship between the different components of cue reactivity: craving, physiological arousal and behaviour such as actual drug seeking and taking do not cohere closely. First, there is a weak or unreliable relationship between subjective reports of craving and other indices of cue reactivity. A review of 13 studies led Tiffany (1990) to conclude that the relationship between physiological measures and self-reported urges accounted for a modest 15–27% of the variance. Similarly, reported urge strength and actual consumption correlated at 0.40, which corresponds to only 16% of shared variance. Second, craving reports following cue exposure proved to be only modestly predictive of subsequent alcohol use (see, e.g., Litt et al., 2003). Third, and perhaps most intriguing, is the replicated finding that subsets of addicted people do not respond to drug-related cues: in some cue-exposure studies, 30% of alcoholic participants failed to report an increased urge to drink. Similarly, Powell et al. (1992) reported that 36% of their opiate-addicted cohort reported no increase in craving when shown slides of their favourite drug. To any clinician this is puzzling, as clients’ self-reports attest to a more direct and consistent relationship between cues, motivation and behaviour. This relationship clearly holds in the majority of cases, but the apparent inconsistency between the subjective experience of craving and urgency remains to be explained.
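The variance figures above follow from squaring a correlation coefficient. A minimal Python sketch of that arithmetic, using the 0.40 correlation and the 15–27% range quoted in the text; the function names are illustrative only:

```python
import math


def shared_variance(r: float) -> float:
    """Proportion of variance two measures share, given their correlation r."""
    return r ** 2


def correlation_for(variance_explained: float) -> float:
    """Size of the correlation implied by a given proportion of shared variance."""
    return math.sqrt(variance_explained)


# Urge strength vs. actual consumption (r = 0.40)
print(f"{shared_variance(0.40):.0%}")  # 16%

# Tiffany's (1990) 15-27% range expressed as correlations
print([round(correlation_for(v), 2) for v in (0.15, 0.27)])  # [0.39, 0.52]
```

Read the other way round, even the upper end of Tiffany’s range corresponds to a correlation of only about 0.5.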

Cognitive Processing and Craving

Earlier, I proposed (Ryan, 2002b) that cognitive processes, in effect intervening variables, mediate between stimulus and response. In most cases the cognitive process is facilitative, in keeping with the fact that drug cues often do elicit craving or desire and appetitive behaviour. This facilitative cognitive bias is, of course, central to the theoretical and applied framework described in this text: preferential processing of cues is seen as the driver of the preoccupation commonly reported by addicted individuals. However, the inconsistent relationships between craving (the subjective experience of wanting or being urged to consume the drug of choice), physiological arousal (e.g. salivation) and behaviour need to be understood, as they can lead the client to invalid conclusions, for example ‘I am not craving, so I don’t need to apply my coping skills’ or ‘I can have one drink because I am not really craving and will therefore not lose control’. These are ‘false negatives’: the absence of craving is interpreted as the absence of risk of lapse. Craving is neither necessary nor sufficient to produce drug-acquisitive behaviour. Because, however, it is commonly the only precursor of impulsivity that is available to introspection, it is accorded more credibility than perhaps it deserves. Craving thus provides limited, inconsistent and sometimes misleading insight into the cognitive and behavioural processes involved in addiction.

Field and Cox (2008, p. 14) proposed that a ‘mutually excitatory relationship’ exists between craving and attentional bias, particularly when there is an expectation that drugs are available. It appears that increased attentional focus on drug cues feeds forward into increased craving, which in turn narrows the focus of attention. Moreover, compromised inhibitory mechanisms are taxed by this process and are unable to disengage attention or to free capacity to deploy coping strategies acquired in therapy. At face value, this proposition does not appear to add much to existing accounts: a tendency to repeatedly focus on a given cue is invoked to account for the fact that the cue is salient. However, as discussed in Chapter 1, it is the involuntary or automatic nature of the preferential processing that is key. Cues ascribed motivational salience are selected very early in the cognitive processing cycle, before any cognitive control can be orchestrated. In the realm of selective attention only one thing really counts: access to the scarce processing resources that are available for conscious representation and manipulation.

Rapid primary appraisal of drug cues can also elicit psychophysiological arousal and craving, which can further increase attentional bias (Franken, 2003; Field, Munafo and Franken, 2009). To return to the motoring analogy above, this is the unwanted acceleration that occurs rapidly and before the driver is aware of it. The second striking finding is the distinctive pattern of neurocognitive deficits that has been detected in the context of substance misuse. At the risk of overstretching the motoring analogy, these go beyond simple brake failure and involve defects in the vehicle’s computerized control system. In Chapter 4 I focused on deficiencies in error monitoring, but broader deficits in executive control have also been noted. It appears that, when attempting to resist drug-seeking impulses, the individual is not only assailed by rapidly detected cues but also impaired in his or her ability to inhibit the resulting cognitive representations and behavioural action tendencies. The following sections focus on understanding impulse control and exploring how any insights gleaned can be used to augment existing cognitive-therapy procedures or point the way towards novel therapeutic strategies. Having identified the behavioural and cognitive processes using experimental approaches, the next step is to explore the potential for behavioural and cognitive change.

Cognitive Bias Modification

Fortuitously, laboratory paradigms such as the modified Stroop test and the visual probe, which have been used to measure cognitive processing biases, can be readily adapted as training procedures in therapeutic contexts, in the same way that measures of behavioural impulsivity such as the ‘go/no go’ task can be adapted. McLeod and colleagues (2002) were the first to adapt the visual-probe paradigm from its original purpose as a measure of attentional engagement and disengagement in order to ‘retrain’ attentional priorities, an approach now known as cognitive bias modification (CBM). In the visual-probe paradigm, participants are typically presented briefly with a pair of stimuli: a negative emotional stimulus (e.g. an angry face) alongside a benign cue (e.g. a smiling or neutral face). These quickly vanish and a ‘probe’, comprising, say, one or two dots, appears in the location previously occupied by one of them. The participant has to indicate via a keyboard whether there are one or two dots; faster responses to probes in a given location reveal that attention was already directed there. Unbeknownst to the participants, a preselected contingency links the probe location to the type of stimulus, so that, depending on the training condition, the probe replaces either the negative or the benign stimulus in 80–100% of presentations. If the probe replaces the nonthreatening stimulus all, or nearly all, of the time over many trials, attentional biases can be modified.
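The training contingency can be sketched in Python. This is a minimal illustration under stated assumptions, not the procedure used by McLeod and colleagues: the trial structure, the function names, the parameter `p_probe_at_benign` and the bias-index convention (one common way of scoring visual-probe data) are hypothetical choices.

```python
import random
from statistics import mean


def probe_training_trials(n_trials, p_probe_at_benign=1.0, seed=0):
    """Hypothetical visual-probe training list.

    On every trial a negative and a benign stimulus appear side by side; the
    probe (one or two dots) then replaces one of them. p_probe_at_benign sets
    the training contingency (e.g. 0.8 to 1.0 to train attention away from
    negative stimuli).
    """
    rng = random.Random(seed)
    n_benign = round(p_probe_at_benign * n_trials)
    trials = []
    for i in range(n_trials):
        negative_side = rng.choice(["left", "right"])  # counterbalance position
        benign_side = "right" if negative_side == "left" else "left"
        probe_at = "benign" if i < n_benign else "negative"
        trials.append({
            "negative_side": negative_side,
            "probe_side": benign_side if probe_at == "benign" else negative_side,
            "probe_at": probe_at,
            "dots": rng.choice([1, 2]),  # participant reports one or two dots
        })
    rng.shuffle(trials)  # contingent and noncontingent trials are intermixed
    return trials


def bias_index(rts_probe_at_benign, rts_probe_at_negative):
    """Mean RT when the probe replaces the benign stimulus minus mean RT when it
    replaces the negative one. Positive values suggest attention was already
    at the negative stimulus."""
    return mean(rts_probe_at_benign) - mean(rts_probe_at_negative)


trials = probe_training_trials(200, p_probe_at_benign=0.9)
print(sum(t["probe_at"] == "benign" for t in trials))  # 180
```

Comparing the bias index before and after many such trials is one way of checking whether the retraining has shifted attention.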

Using a nonclinical sample, McLeod and colleagues showed that reducing attentional bias through repetitive practice appeared to reduce experimentally induced anxiety. Subsequently, the CBM paradigm was used with emotionally disordered patients (see, e.g., Amir et al., 2009). Initial results appeared encouraging, supporting the hypothesis that teaching cognitive control skills could yield therapeutic gain, even after relatively brief exposure to the putative therapeutic intervention. However, a recent meta-analysis (Hallion and Ruscio, 2011) suggests that claims of increased efficacy might be premature, at the very least. It found smaller effect sizes, for example g = 0.29 for attentional bias reduction following CBM with anxious patients, although this effect was still statistically significant. Moreover, once publication bias (the nonpublication of nonsignificant findings, known as the ‘file-drawer’ problem) was controlled for statistically, the degree of clinical gain indexed by lower levels of anxiety and depression appeared nonsignificant (g = 0.13). In the subset of the 45 included studies that assessed dysphoric symptoms after participants experienced a stressor, such as a threatening video or an upcoming exam, following CBM training, the effect was larger (g = 0.23), albeit falling short of significance. This is consistent with the latent nature of implicit cognitive biases, which may become manifest mainly under stress.
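For readers unfamiliar with the g statistic quoted above, Hedges’ g is a standardized mean difference with a small-sample correction. A minimal Python sketch, using the common approximation to the correction factor; the group statistics in the example are invented for illustration and are not taken from Hallion and Ruscio (2011):

```python
import math


def hedges_g(mean1, sd1, n1, mean2, sd2, n2):
    """Standardized mean difference (group 1 minus group 2), bias-corrected."""
    # Pooled standard deviation across both groups
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / (n1 + n2 - 2)
    d = (mean1 - mean2) / math.sqrt(pooled_var)
    # Approximate correction factor J, which shrinks d slightly in small samples
    j = 1 - 3 / (4 * (n1 + n2) - 9)
    return d * j


# Hypothetical anxiety scores: control vs. CBM group, 20 participants each
g = hedges_g(20.0, 10.0, 20, 17.0, 10.0, 20)
print(round(g, 2))  # 0.29
```

A g of about 0.3 thus means the groups differ by roughly three-tenths of a pooled standard deviation.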

Attentional Bias in the Context of Addiction

There are now several studies suggesting that the degree of attentional bias towards addiction-related stimuli observed during treatment has some predictive power with regard to treatment outcome. Cox et al. (2002), for example, found that some problem drinkers in an inpatient detoxification unit showed an increase in attentional bias during their stay, as indexed by their performance on the modified Stroop test at the start of treatment and just before discharge. These drinkers were more likely to be classified as unsuccessful three months later, having either relapsed or been lost to follow-up. The ‘successful’ cohort showed no change in attentional bias, in common with the control group, who were heavy social drinkers recruited from the treatment unit staff. These findings suggest that patients who became increasingly focused on alcohol cues following detoxification were more vulnerable to relapse. Consistent with these findings, Marissen et al. (2006) found that attentional bias measured by a modified Stroop test at the beginning of inpatient treatment predicted outcome for heroin-addicted recruits, again over a three-month follow-up period. However, these findings did not address the question of whether systematically targeting cognitive biases in order to reverse them could enhance outcome.

More can be deduced about causality, with the added promise of therapeutic gain, by manipulating the degree of cognitive bias and assessing how this affects key components of addictive processes such as craving and drug-seeking behaviour. To this end, Field et al. (2007) devised an attentional training procedure, derived from the visual-probe paradigm, designed to increase or decrease attentional bias for alcohol-related stimuli in a sample of 60 heavy social drinkers. Results indicated that it was possible to increase attentional bias, and this was associated with increased craving and desire for alcohol, at least among the majority of participants who recognized the contingency between the probe and the fact that it invariably replaced an alcohol-related image. However, this subset, despite their higher levels of craving, did not consume more beer on a subsequent ‘taste test’. Paradoxically, the group trained to shift their attention away from alcohol-related stimuli did show reduced attention towards the trained alcohol-related stimuli, but also an unpredicted increase in attentional bias for novel alcohol-related stimuli to which they were subsequently exposed. Clearly, this would be a negative outcome should it occur in a clinical setting, given the findings reviewed above linking increased attentional bias at the beginning of treatment to poorer outcomes. However, Field et al.’s participants did not demonstrate increased attentional bias for alcohol-related stimuli at baseline, possibly an artefact of recruitment through media and Internet advertising. Moreover, there was no evidence that the effects of the attentional training procedure generalized to other tasks designed to measure attentional processing, such as the modified Stroop task. This suggests that participants were not acquiring more general cognitive control skills but were simply becoming more efficient at the particular experimental task. In any event, the observed changes in attentional processing did not seem to be associated with reductions in subjective craving or subsequent drug-seeking behaviour.

Turning to research with smokers, Waters et al. (2003) recruited a sample of 158 smokers aiming to give up. Again, those who showed greater attentional bias were more susceptible to an early lapse. The 49 participants who smoked within one week were 129 ms slower to colour-name smoking-related than neutral words, whereas the 73 who remained abstinent showed a 52 ms delay in responding to smoking versus neutral words. While causality cannot be inferred from these findings, it seems plausible that those who found it harder to ignore evocative smoking cues on the experimental task also found it harder to distract themselves from smoking cues in naturalistic settings, and thus became more susceptible to smoking once again.

The Alcohol Attention-Control Training Programme

The Alcohol Attention-Control Training Programme (AACTP; Fadardi, 2003; Fadardi and Cox, 2009) is a computerized programme designed to help problem drinkers override the attentional bias that is deemed to instigate alcohol-seeking behaviour. It is a promising medium for delivering CBM, assuming access to personal computing facilities and a therapist familiar with the procedure. Once a baseline has been established for the attention-grabbing power of various alcohol cues, the participant is given feedback and personal goals are set, typically reducing the degree of attentional bias and agreeing to participate in the practice sessions. After each training session participants are given visual feedback on their performance, indexed by the number of errors and by the overall degree of attentional bias expressed as an ‘interference score’. Interference is calculated by subtracting the mean reaction time to neutral stimuli from the mean reaction time to alcohol-related stimuli. In one example of the procedure, which has varying levels of difficulty or challenge, participants view either an alcoholic or a nonalcoholic beverage surrounded by one of four colours (red, yellow, blue or green). In keeping with the Stroop imperative, the participant is required to name the background or surrounding colour of the nonalcoholic stimulus as quickly and as accurately as possible. Over many repetitions, the participant’s attention is thus directed towards the nonalcoholic image and away from the alcoholic one.
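The interference score described above reduces to a difference of two means. The Python sketch below also counts errors, since the feedback covers both. The trial format, the reaction times and the choice to exclude error trials from the means are assumptions for illustration, not details of the AACTP software.

```python
from statistics import mean

# Each trial: (stimulus_type, reaction_time_ms, correct). Values are hypothetical.
trials = [
    ("alcohol", 712, True), ("alcohol", 690, True), ("alcohol", 745, True),
    ("alcohol", 701, True), ("alcohol", 980, False),
    ("neutral", 640, True), ("neutral", 655, True), ("neutral", 630, True),
    ("neutral", 662, True),
]


def session_feedback(trials):
    """Return (error_count, interference_ms) for one training session."""
    errors = sum(1 for _, _, correct in trials if not correct)
    # Assumption: only correct responses enter the reaction-time means
    alcohol_rts = [rt for kind, rt, ok in trials if kind == "alcohol" and ok]
    neutral_rts = [rt for kind, rt, ok in trials if kind == "neutral" and ok]
    # Interference = mean RT(alcohol) - mean RT(neutral);
    # a positive score means alcohol cues slowed responding
    interference = mean(alcohol_rts) - mean(neutral_rts)
    return errors, interference


print(session_feedback(trials))  # (1, 65.25)
```

A score falling towards zero across sessions would indicate that alcohol cues are capturing less attention. The same subtraction expresses the smoking-versus-neutral delays reported by Waters et al. (2003).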

Fadardi and Cox (2009) subsequently evaluated the AACTP in a sample of hazardous drinkers (mean weekly consumption 44.6 units) and harmful drinkers (mean weekly consumption 71.5 units). Baseline measurements found that the harmful drinkers manifested more attentional bias than the hazardous drinkers. In turn, both of these groups showed higher levels of Stroop interference than a comparison group of social drinkers drinking within recommended levels. The hazardous and harmful drinkers then underwent four weekly sessions of attentional training. Although only the drinkers classified as ‘harmful’ were followed up, they had reduced their attentional bias and alcohol consumption by the end of the four weeks of training, and these reductions were maintained at three months. In addition, their confidence in their ability to control their drinking had increased, as had their readiness to change. Fadardi et al. (2011) describe further work extending the AACTP to opiate-dependent people. Preliminary results suggest that those receiving attentional training showed decreased levels of attentional bias, drug temptations and supplementary drug use (i.e. acquiring and using illicit substances) at two- and six-month follow-up assessments. They also needed fewer doses of methadone, the standard opiate substitution drug.

A recent study in the Netherlands (Schoenmakers et al., 2010) provided more encouraging findings, bolstered by a strong randomized design. Schoenmakers and co-workers recruited 43 abstinent alcohol-dependent clinic attendees and randomly assigned them to CBM or a control condition. Participants had all been detoxified and were engaged in CBT. A modified visual probe task was designed so that the probe always replaced a neutral as opposed to an alcohol-related picture over a series of five training sessions amounting to 2640 responses. Results indicated that the CBM procedure, compared with sham training, facilitated disengagement from alcohol cues but did not appear to impact on engagement or speeded detection. Importantly, the enhanced ability to disengage from cues generalized to new pictures of alcohol-related material. However, there was no post-test difference in self-reported craving between experimental and control groups. Neither was there a significant difference in clinical outcome, indexed by rates of relapse: 25% relapsed in the CBM condition and 21% in the control group, although the former took, on average, 1.25 months longer to relapse. Overall, the study does show that CBM can be used as an adjunct to standard treatments such as CBT and this seems to lead to meaningful changes in cognitive processing and at least short-term therapeutic gain. The clients did not appear to balk at the repetitious nature of the procedure, and were judged by their clinician to make a faster recovery than the control participants. In fact, the CBM group were discharged 28 days earlier on average than those in the control group. While convincing evidence of more manifest change in the parameters of addiction such as craving or ultimate vulnerability to relapse was lacking, the changes that were noted (early discharge and longer latency to relapse) appear clinically relevant.

Modifying Implicit Approach Tendencies

Addiction entails a very high frequency of response with regard to, say, smoking and consuming alcohol. As we have seen, instrumental behaviour becomes highly automated insofar as the necessary actions can take place with little or no conscious awareness. This is underpinned by changes at the neuronal level, whereby habitual behaviour is governed less by frontal or executive neural systems and more by dorsal regions of the striatum (see Robbins and Everitt, 2002, and Chapter 3 of this volume). For the habitual drug user, this transition is likely to be experienced as finding oneself reaching for the drug of choice in a reflexive manner. Wiers et al. (2010) explored the possibility that these implicit approach tendencies could be reversed through retraining. They recruited 42 individuals designated as hazardous drinkers and randomly assigned them to a condition in which they were trained either to avoid or to approach images of alcoholic beverages presented on a computer screen. Approach and avoidance were enacted by instructing participants to push (an avoidant response) or pull (an approach response) a lever depending on whether the image was in ‘landscape’ or ‘portrait’ format. The participants were not told that, in the majority of cases, the avoid (push lever) response to landscape formats also meant rejecting an alcoholic beverage. Conversely, the approach (pull lever) response in the majority of cases enacted an approach to nonalcoholic beverages. The association between ‘approach-nonalcohol’ and ‘avoid-alcohol’ was kept below 100% because participants would more readily recognize the link between format, image and response if there were no variation or invalid trials. Those in the avoid-alcohol condition, instructed to shun the landscape format and embrace the portrait format, pushed most (90%) of the alcoholic drinks away and pulled most (90%) of the nonalcoholic drinks towards them. Participants in the approach-alcohol condition were given the reverse contingencies, that is, predominantly pulling alcohol towards them and pushing soft drinks away. After the implicit training, participants performed a sham taste test that included beers and soft drinks. Participants in the approach-alcohol condition drank more alcohol than those in the avoid-alcohol condition. Interestingly, no effect was found on subjective craving.
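The 90/10 contingency can be made concrete with a short Python sketch that generates a training list. It is an illustration only: the function and parameter names, the 100 trials per drink type and the exact assignment scheme are assumptions rather than details of Wiers et al.’s software.

```python
import random


def aat_trials(n_per_drink, condition, contingency=0.9, seed=0):
    """Hypothetical approach-avoidance training list.

    The explicit rule is fixed: landscape -> push (avoid), portrait -> pull
    (approach). What the condition changes is which drinks usually appear in
    which format. condition is "avoid-alcohol" or "approach-alcohol".
    """
    rng = random.Random(seed)
    n_trained = round(contingency * n_per_drink)
    trials = []
    for drink in ("alcohol", "soft_drink"):
        # In avoid-alcohol, alcohol is mostly pushed and soft drinks mostly
        # pulled; approach-alcohol reverses both mappings.
        mostly_avoid = (drink == "alcohol") == (condition == "avoid-alcohol")
        for k in range(n_per_drink):
            avoid = mostly_avoid if k < n_trained else not mostly_avoid
            trials.append({
                "drink": drink,
                "format": "landscape" if avoid else "portrait",
                "response": "push" if avoid else "pull",
            })
    rng.shuffle(trials)
    return trials


def share(trials, drink, response):
    """Proportion of a drink's trials that required the given response."""
    subset = [t for t in trials if t["drink"] == drink]
    return sum(t["response"] == response for t in subset) / len(subset)


trials = aat_trials(100, "avoid-alcohol")
print(share(trials, "alcohol", "push"), share(trials, "soft_drink", "pull"))  # 0.9 0.9
```

Because participants respond only to image format, the drink-response pairing is learned implicitly; the 10% of reversed trials keep that pairing from becoming obvious.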

Demonstrating how rapidly experimental methods can migrate to the clinical arena, Wiers et al. (2011) allocated 214 alcohol-dependent inpatients to either four sessions of this behavioural analogue of CBM or a control training procedure. All patients also received treatment as usual based on one-to-one and group CBT. Participants in the experimental group showed the predicted reversal, from approach bias to avoidance bias, in response to alcohol pictorial stimuli. This was echoed by their performance on an Implicit Association Test. The impact on craving (which tended to be low in any event) in response to alcoholic or nonalcoholic beverages presented after experimental and control training was less clear. At one-year follow-up, 59% of the control group (63 of 106 participants) had relapsed compared with 46% of the experimental group (50 of 108), a difference that just fell short of statistical significance. An outcome was deemed successful if there was no relapse, or a single episode of drinking lasting less than three days that was ended by the patient without further negative consequences. As the authors pointed out, this binary outcome measure did not lend itself well to exploring the mediating effects of CBM in what was primarily an experimental study rather than a clinical trial. Nonetheless, this brief augmentation to standard CBT appears to have altered core cognitive and behavioural processes in the desired direction and quite possibly contributed to sustained recovery.
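The relapse figures can be checked with a simple two-proportion z-test. This Python sketch is an illustrative reanalysis of the reported counts only; it is not necessarily the test Wiers et al. (2011) used, and it ignores covariates and any cases lost to follow-up.

```python
import math


def two_proportion_z(x1, n1, x2, n2):
    """Pooled two-proportion z-test (no continuity correction).

    Returns (p1, p2, z, two-tailed p)."""
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-tailed, normal approximation
    return p1, p2, z, p_value


# Relapse counts at one year: control 63/106, experimental 50/108
p1, p2, z, p = two_proportion_z(63, 106, 50, 108)
print(f"control {p1:.0%}, experimental {p2:.0%}, z = {z:.2f}, p = {p:.3f}")
```

On these counts the sketch gives z of about 1.92 and a two-tailed p of about 0.054, in line with a difference just short of the conventional 0.05 threshold.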

Houben et al. (2011) recruited a sample of heavy-drinking students in the Netherlands. Using a standard procedure known as the go/no go task, participants were trained either to respond or to inhibit a response to an alcohol-related image, such as a glass of beer, or to a control image, such as a glass of water. Those trained to inhibit a response to alcohol (the no-go condition) were trained never to press a button when shown the alcohol image and always to press it when shown the glass of water. The control group carried out the converse procedure, being trained to respond to the alcohol cue and inhibit a response to the nonalcoholic cue. Following 80 trials, the hypothesis that training in response inhibition would lead to actual behavioural change, that is, a reduction in drinking, was supported.
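The two training conditions differ only in which cue carries the no-go signal. A minimal Python sketch, assuming an equal split of 40 alcohol and 40 water trials (the text specifies only 80 trials in total); the condition labels and function names are hypothetical.

```python
import random


def go_nogo_trials(n_per_cue, condition, seed=0):
    """Hypothetical go/no-go training list.

    condition "inhibit-alcohol": alcohol = no-go, water = go.
    condition "respond-alcohol" (control): the mapping is reversed.
    """
    nogo_cue = "alcohol" if condition == "inhibit-alcohol" else "water"
    trials = [{"cue": cue, "go": cue != nogo_cue}
              for cue in ("alcohol", "water") for _ in range(n_per_cue)]
    random.Random(seed).shuffle(trials)
    return trials


def score(trials, pressed):
    """Count commission errors (pressing on no-go trials) and omission errors
    (withholding on go trials). pressed holds one bool per trial."""
    commissions = sum(1 for t, p in zip(trials, pressed) if p and not t["go"])
    omissions = sum(1 for t, p in zip(trials, pressed) if t["go"] and not p)
    return commissions, omissions


trials = go_nogo_trials(40, "inhibit-alcohol")  # 80 trials in total
always_press = [True] * len(trials)
print(score(trials, always_press))  # (40, 0)
```

Commission errors on alcohol trials are the failures of inhibition that the training is meant to reduce.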

Reversing the Bias: Conclusion

The emerging technologies described above have the potential to modify cognitive and behavioural processes associated with appetitive and potentially addictive behaviours. Some of the findings (see, e.g., Field et al., 2007) highlighted problems with generalizability across stimuli and across procedures for measuring or modifying cognitive processing, as well as anomalies such as reduced attentional bias towards one set of stimuli apparently facilitating processing of alternative but semantically similar stimuli. A more fundamental problem with generalizability is that of drawing inferences from participants recruited from the community to the treatment-seeking, and indeed treatment-needing, population for whom this book is ultimately written. Apart from the clinical studies by Schoenmakers et al. (2010) and Wiers et al. (2011), participants were mostly not treatment seekers and did not meet the criteria for addictive disorders. Addicted individuals would have had much more intense and frequent exposure to drugs, alcohol and expressions of other compulsions such as gambling. Quite apart from any direct cognitive deficits linked to neurotoxicity, they will have acquired a significantly higher degree of automaticity across cognitive and behavioural response systems. Insofar as CBM is a retraining procedure, I envisage that more repetitive practice would be required given the existing strength of the processing bias that has to be overcome. The two complementary techniques that aim to modify cognitive and behavioural responding using implicit training procedures (i.e. procedures that do not, at face value, necessarily reveal their true purpose, although patients will of course be informed of this) can be adapted to run on computerized or Internet-based applications. They are a promising adjunct to existing approaches and merit more systematic and controlled evaluation in clinical settings.

Brain Training and Neurocognitive Rehabilitation Approaches

Attentional processing is a core component of cognitive control, requiring effortful suppression of prepotent responses. Plausibly, it is attentional capture, with or without conscious awareness, that ignites the impulsive behaviour that defines addiction. Procedures that enhance cognitive efficiency may therefore improve control over habitual or automatic responses. Neurocognitive rehabilitation techniques, more recently popularized as ‘brain-training’ procedures, thus complement, or perhaps extend, the CBM techniques and behavioural approach–avoidance training discussed above. In practice, brain training aims to improve cognitive function through regular training, usually with computerized tests. Whereas CBM procedures are highly specific with respect to the chosen stimuli that the individual is trained to attend to/approach or not attend to/avoid, brain training aims to foster a more generalized improvement in cognitive efficiency. The generalizability of any putative enhancements is therefore crucial. Somewhat disappointingly, some recent reviews suggest that individuals who engage in tasks aimed at improving cognitive efficiency show strong training effects on the specific task, but that these do not appear to generalize to other tasks. This is problematic in the addiction context, as there is a potentially infinite variety of cues, or combinations of cues, that can prime impulsive approach behaviours.

A further challenge that has to be addressed is the durability of any cognitive gains associated with training. In this regard, it is important to emphasize that addiction is a chronic syndrome prone to relapse months or years after restraint is initially established. A ‘quick fix’ is therefore unlikely to confer enhanced outcomes in the longer term. Owen et al. (2010) concluded on the basis of their review that modest effects had been observed with older individuals and preschool children. They found little, if any, empirical support for the view that brain training conferred benefits across the wider population. Their own findings supported this. They assigned 11,430 participants recruited online to one of two training groups. One group engaged with tasks that emphasized reasoning, planning and problem-solving. The other practised a broader range of tasks aimed at enhancing short-term memory retention and visuospatial functioning. A third group was assigned to a control condition in which participants were tasked with answering obscure questions from six different categories using online sources. Participants improved significantly, with large effect sizes, on the tasks on which they were trained. However, performance on ‘benchmarking’ tests, including short-term memory and reasoning, improved only marginally over the six-week training period, with effect sizes as low as 0.01. Moreover, the control group also improved on these tests, which were designed to capture generalized improvement in cognitive functioning. The authors concluded that the equivalent small gains observed across experimental and control groups could reflect practice effects, because the tests were repeated. The gains could also reflect the adoption of new task strategies, or possibly a combination of the two.

It would appear, therefore, that normal individuals have little to gain from general brain training, apart from improved performance on the training task itself. What, however, if more focused training of core cognitive competencies is applied, perhaps where performance is compromised by factors such as ageing or indeed exposure to neurotoxic drugs? In the aptly named COGITO study, Schmiedek et al. (2010) examined whether there was positive transfer following 100 days of one-hour training sessions on tests of perceptual speed, WM and episodic memory in cohorts of 103 older and 101 younger participants. These researchers found reliable positive transfer, both for individual tests and for cognitive abilities represented as latent factors or constructs such as reasoning and cognitive control. Regarding the latter, for example, Chein and Morrison (2010) found that a group of 21 undergraduates randomly assigned to an arduous WM training procedure showed significant improvement on a conventional colour-conflict Stroop task compared with untrained controls. The trained cohort also showed significant comparative gains in reading comprehension. This replicated earlier findings (see, e.g., Olesen et al., 2004; Westerberg and Klingberg, 2007) showing enhanced performance on tasks or core cognitive competencies that were not on the training curriculum. In the latter study, improved performance was detectable for several months following the training. Moreover, these researchers found that enhanced WM performance was mirrored by increased activity in the dorsolateral prefrontal cortex and parietal regions, as revealed by fMRI. These findings are consistent, albeit in a rather oblique manner, with one of the key predictions derived from the model in Figure 2.1. On that prediction, improving executive control or ‘top-down’ processes represents a relatively distinct therapeutic avenue through which the implicit ‘bottom-up’ mechanisms of impulse control can be modulated.
This complements therapeutic interventions that target implicit processes more directly, such as CBM (see, e.g., Schoenmakers et al., 2010) and reversing behavioural approach tendencies (see, e.g., Wiers et al., 2010) referred to above. Assuming that cognitive control is impaired, efforts to restore or improve cognitive control should thus prove remedial, especially if any gains are generalizable and lasting. In particular, findings that fostering WM capacity can improve attentional control in a collateral manner (i.e., without directly targeting attentional processing per se) suggest a novel means of overcoming attentional bias, a process that is pivotal in the cycle of addictive behaviour. This also provides the therapist and client with a more flexible range of therapeutic strategies. For example, the client could be encouraged to do things that require the repeated utilization of WM or executive control competencies. This could include a wide range of activities, including reading a novel, doing crossword puzzles, computer gaming or simply engaging in more social interaction. This overlaps with the broader therapeutic inheritance of establishing and pursuing goals, not least because goal maintenance is itself a key component of cognitive control.

Physical exercise

For many clients with substance misuse and addictive behaviour problems, more ‘intellectual’ endeavours such as reading have not featured strongly in their history. Fortuitously, it appears that cardiovascular fitness training (CFT) can improve cognitive and behavioural functioning, possibly through a neurogenerative process. Earlier (p. 69) I noted that exercise was associated with improved BDNF-dependent synaptic plasticity. Much of this work has been with laboratory animals. However, neuroanatomical evidence from older human participants shows benefits to brain health comparable to those observed in ageing animals. In a cross-sectional study of people aged 55–79 (Colcombe et al., 2003), frontal, prefrontal and parietal cortices showed a significantly reduced tendency towards age-related declines in cortical tissue density among those with higher levels of aerobic fitness. Study 2 of Colcombe et al. (2004) consolidated these findings by randomly assigning a group of 29 high-functioning 58–78-year-olds to CFT or a non-aerobic control condition involving stretching and toning. Both conditions required participants to practise three times a week in 40–45 minute sessions over a six-month period. Participants were then required to complete a cognitively demanding task involving valid and invalid cues. Those who received CFT and were judged to have increased their cardiovascular fitness showed increased activity in areas associated with robust attentional control, such as the middle frontal gyrus (MFG) and superior frontal gyrus (SFG). Moreover, there was less activation in areas such as the anterior cingulate cortex (ACC). The investigators interpreted this as evidence of less cognitive conflict and, presumably, of less effort required for error monitoring and inhibitory control.
These findings echoed those found in a parallel cross-sectional study (Colcombe et al., 2004, Study 1), which divided participants according to their level of cardiovascular fitness and associated training. Across both studies, either people who showed pre-existing levels of greater cardiovascular fitness, or those who were trained to acquire such fitness, performed significantly better on the experimental cognitive task.

Returning to the subject of addiction, there is a dearth of studies evaluating the potential benefits of exercise for treatment outcome. However, in one of the few extant randomized controlled trials, Marcus et al. (1999) found that adding vigorous exercise to CBT made a significant contribution to improving outcome in a group of 281 female smokers attempting to quit. At 12-month follow-up, 11.9% of those in the exercise condition were abstinent, compared with 5.4% of the control group. An added benefit was less weight gain (3.05 versus 5.40 kg) in the more aerobically active cohort.
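The two abstinence rates reported for Marcus et al. (1999) can be turned into summary figures that are often useful when discussing outcomes with clients. The short Python sketch below is illustrative only. The risk difference, rate ratio and number needed to treat (NNT) are derived here from the two reported percentages and were not reported in this form by the study. The helper name `compare_rates` is hypothetical.

```python
import math

def compare_rates(treated: float, control: float) -> dict:
    """Summarize two abstinence rates given as proportions (0.119 = 11.9%)."""
    risk_difference = treated - control    # absolute gain in abstinence
    rate_ratio = treated / control         # how many times more likely abstinence is
    nnt = math.ceil(1 / risk_difference)   # number needed to treat, rounded up
    return {"risk_difference": risk_difference,
            "rate_ratio": rate_ratio,
            "nnt": nnt}

# 12-month abstinence rates as reported for Marcus et al. (1999)
result = compare_rates(treated=0.119, control=0.054)
print(f"Absolute difference: {result['risk_difference']:.3f}")
print(f"Rate ratio: {result['rate_ratio']:.2f}")
print(f"Number needed to treat: {result['nnt']}")
```

This prints an absolute difference of 0.065 (6.5 percentage points), a rate ratio of 2.20 and an NNT of 16. On these figures, roughly 15 to 16 smokers would need to receive the exercise programme for one additional person to be abstinent at 12 months. This ignores the uncertainty around the estimates, which cannot be recovered from the percentages alone.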

Working memory and delay discounting

Excessive discounting of future rewards (delayed reward discounting or DRD) has been observed in a variety of disorders. It nonetheless appears to be emblematic of addiction, insofar as it reflects the prioritization of immediate gratification and the discounting or devaluing of deferred gratification. The addicted individual will consistently opt for the immediate gratification associated with substance use or gambling and appears to devalue the longer-term rewards associated with restraint. DRD thus reflects a type of impulsive decision making that discounts the value of a reward according to how long it is delayed. DRD has been observed in a range of addicted populations. Converging, although not necessarily conclusive, evidence implicates DRD as a pre-existing vulnerability factor, possibly of genetic origin, for addictive disorders. In a prospective study, Audrain-McGovern et al. (2009) measured delay discounting in a cohort of 947 American 16–21-year-olds attending high school. They found that each one standard deviation (SD = 1.41) increase in baseline delay discounting, measured by questionnaire, was associated with an 11% increase in the odds of smoking uptake (OR = 1.11, 95% CI 1.03–1.23). It thus appeared that a tendency to ‘want it now; not tomorrow’ in a broader developmental context will prove something of a liability when drug-delivered rewards are encountered. Krishnan-Sarin et al. (2007) found that delay discounting predicted failure to quit in a group of treatment-seeking adolescents. MacKillop et al. (2011) conducted a meta-analytic review of DRD studies. They concluded that there was sufficient evidence to implicate DRD as a vulnerability factor for addiction to drugs and pathological gambling, and one that remains stable over time. These reviewers also concluded that drug exposure can itself engender or potentiate DRD, in effect acting as a recursive vulnerability factor for the persistence of patterns of addictive behaviour.
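The text does not commit to a formal model of discounting. One widely used description, Mazur's hyperbolic function, values a reward of amount A delivered after delay D as V = A / (1 + kD). A larger discount parameter k means steeper devaluation of deferred rewards. Below is a minimal Python sketch, assuming this hyperbolic form. The reward amounts, the delay and both k values are hypothetical and chosen only for illustration.

```python
def hyperbolic_value(amount: float, delay: float, k: float) -> float:
    """Subjective present value of a delayed reward: V = A / (1 + k * D)."""
    return amount / (1 + k * delay)

def prefers_immediate(immediate: float, delayed: float, delay: float, k: float) -> bool:
    """True if the immediate reward outweighs the discounted delayed reward."""
    return immediate > hyperbolic_value(delayed, delay, k)

# Hypothetical choice: 50 units now versus 100 units in 30 days (k is per day).
for label, k in [("shallow discounter", 0.01), ("steep discounter", 0.1)]:
    value = hyperbolic_value(100, 30, k)
    print(f"{label}: delayed reward worth {value:.1f} now; "
          f"takes immediate reward: {prefers_immediate(50, 100, 30, k)}")
```

Faced with the same options, the steep discounter values the delayed 100 units at only 25.0 and takes the smaller, immediate reward. The shallow discounter values it at about 76.9 and waits. This is the pattern the DRD literature associates with addiction.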

Clinical Implications of Delayed Reward Discounting

Bickel et al. (2011), in a ground-breaking study, focused on the interaction between WM and delay discounting. Bickel and his co-workers recruited 27 stimulant-dependent individuals, measured their propensity to discount delayed rewards and trained 14 of them on a range of WM tasks. Controls carried out comparable cognitive training but were not required to engage core WM processes. Those who engaged in WM training showed a significant decrease in delay discounting when re-evaluated, whereas controls showed no change. This was a highly specific outcome and groups did not differ significantly on any other pre- or post-training measure such as Letter Number Sequencing (Wechsler, 1997). Bickel and colleagues concluded that the findings potentially support a new strategy or intervention by which to decrease the discounting of delayed rewards. However, as this study appears to be the first to demonstrate that neurocognitive training of WM can decrease delay discounting, replication is important. Further, as pointed out by the authors, crucial questions relating to the durability of the reduced delay discounting remain unanswered pending further research.

The above findings support the heuristic value of dual-processing models of addiction predicated on competing neural systems. The first is an impulsive decision system concerned with the acquisition of immediate gratification or reinforcement, subserved by limbic and paralimbic regions. The second is a more strategic, executive system embodied in the prefrontal cortex and concerned with planning and deferred outcomes. Further, neuroimaging data indicate that prefrontal regions such as the dorsolateral prefrontal cortex are engaged during both WM and delay discounting tasks. This suggests that augmenting one core process should facilitate the other, as WM training apparently facilitated inhibition of impulsive choice in the experiment of Bickel et al. Consistent with this, McClure et al. (2007) found greater limbic activation when a choice between an immediate and a delayed reward was required than when the choice was between two delayed rewards. Conversely, similar activity levels were observed in the posterior parietal cortex and the lateral prefrontal cortex regardless of whether the choice was between an immediate and a delayed reward or between two delayed rewards. Accordingly, the finding that augmenting WM functioning can have a measurable impact on delay discounting appears consistent with these data. It also points the way towards bridging the gap between the neuroscience laboratory and the addiction clinic. Bickel and colleagues concluded that their results offer evidence of a functional relationship between delay discounting and WM. In the absence of replication, these findings can be little more than indicative. Nonetheless, they point to a hitherto unexplored arena for addressing a key component of addiction, expressed as ‘short-term gain = (probably) long-term pain’.
Ultimately, the utility of this procedure will depend on whether neurocognitive training such as this can improve outcomes in addictive disorders.
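The dual-system account can be made concrete with the quasi-hyperbolic (‘beta-delta’) model from behavioural economics, on which McClure and colleagues drew in motivating the two-system view. In this model a parameter beta applies only to delayed rewards (loosely, the impatient, limbic-like system). An exponential factor delta applies per unit of delay (loosely, the more patient, prefrontal-like system). Mapping the parameters onto brain systems is a simplification, and all numbers below are hypothetical. The Python sketch shows why an impulsive beta term should influence choices between an immediate and a delayed reward, but not choices between two delayed rewards.

```python
def beta_delta_value(amount: float, delay: int, beta: float, delta: float) -> float:
    """Quasi-hyperbolic value: an immediate reward is taken at face value; any
    delayed reward is scaled by beta once and by delta for each unit of delay."""
    if delay == 0:
        return amount
    return beta * (delta ** delay) * amount

def choose(a: tuple, b: tuple, beta: float, delta: float) -> tuple:
    """Return whichever (amount, delay) option has the higher value; ties go to a."""
    value_a = beta_delta_value(*a, beta, delta)
    value_b = beta_delta_value(*b, beta, delta)
    return a if value_a >= value_b else b

delta = 0.99  # hypothetical per-day exponential discount factor
for beta in (1.0, 0.6):  # 1.0 = no extra 'impatience'; 0.6 = impulsive present bias
    now_vs_later = choose((80, 0), (100, 14), beta, delta)
    later_vs_later = choose((80, 30), (100, 44), beta, delta)
    print(f"beta={beta}: now vs later -> {now_vs_later}; "
          f"later vs later -> {later_vs_later}")
```

With beta = 0.6 the choice switches to the immediate 80 units. Yet the same chooser still prefers the larger reward when both options are shifted 30 days into the future, because beta then scales both options equally and cancels out. This preference reversal mirrors the imaging pattern described above: limbic involvement matters only when an immediate option is on the table.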

The approaches to WM training discussed above all focus on what has been termed ‘core training’, which involves repeated practice of demanding WM tasks designed to target core competencies or general WM mechanisms. Chein and Morrison (2010) distinguished core training from ‘strategy training’, which teaches mnemonic techniques. These include imagery, ‘chunking’ (grouping items into larger meaningful units), devising a mental story within which the memory items are embedded, or simply rehearsal. Core training, by way of contrast, aims to

- limit the use of domain specific strategies

- minimize automatization

- include tasks or stimuli that span different modalities such as verbal or visual memory

- require maintenance despite interference

- oblige the trainee to conduct rapid encoding and retrieval operations

- adapt to trainees’ varying degrees of proficiency with demanding cognitive workloads.
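The last criterion, adapting to the trainee's proficiency, is commonly implemented as a staircase. The memory load rises after good performance and falls after poor performance. Below is a minimal Python sketch for an n-back style task. The thresholds (90% and 70% accuracy), the starting level and the block accuracies are hypothetical, and this is not the specific procedure used by Chein and Morrison (2010).

```python
def next_level(level: int, accuracy: float,
               up_threshold: float = 0.9, down_threshold: float = 0.7,
               min_level: int = 1) -> int:
    """Adjust WM load (e.g. n in an n-back task) after a block of trials."""
    if accuracy >= up_threshold:
        return level + 1                     # doing well: make the task harder
    if accuracy < down_threshold:
        return max(min_level, level - 1)     # struggling: ease off, never below 1
    return level                             # in the target band: hold the level

# Hypothetical block accuracies for one trainee across a session.
level = 2
history = []
for accuracy in [0.95, 0.92, 0.65, 0.80, 0.91]:
    level = next_level(level, accuracy)
    history.append(level)
print(history)
```

The design keeps each trainee near the edge of their capacity rather than repeating a task they have already mastered. Mere repetition would tend to promote the automatization that core training tries to avoid.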

A different approach derives from the innovative work of Baumeister and his team (e.g. Baumeister et al., 1998). They used the term ‘ego depletion’ to denote the finding that self-control apparently diminishes when repeatedly exercised, analogous to muscle fatigue following exertion. Self-control or willpower is thus seen as a limited resource: an individual may resist on the first, second or subsequent challenge, but eventually succumbs to some degree. The muscle analogy also raises the possibility that training could increase strength or endurance, leading to improved self-control performance. Muraven (2010) exploited this potential when he evaluated the hypothesis that self-control training would contribute to success in abstaining from smoking. He trained smokers who intended to quit to practise small acts of self-control, such as squeezing a bar to the point of discomfort. The results suggested that augmenting self-control skills did facilitate abstinence. One month later, 27% of the active ‘self-control’ group were verifiably abstinent from smoking, compared with 12% of the controls. The controls were given a range of tasks that required some effort but did not particularly involve self-control.

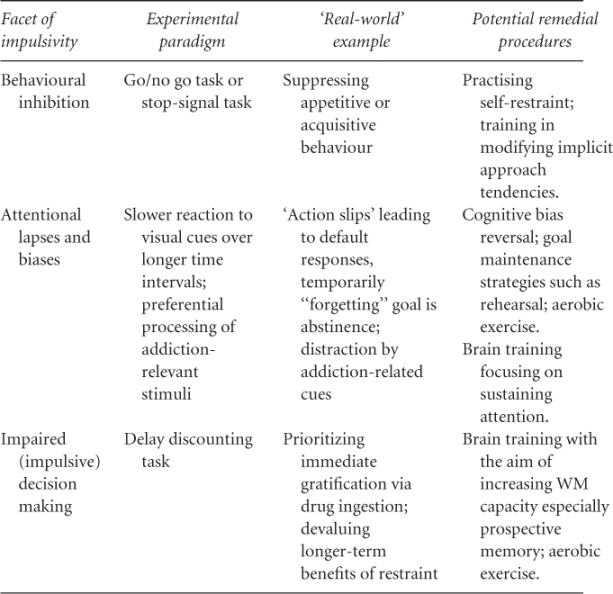

Adopting a more cognitive approach, Jones et al. (2011) induced different mental sets in a cohort of 90 social drinkers. They emphasized either the importance of cautious responding and successful inhibition (Restraint group), the importance of rapid responding (Disinhibition group) or the equal importance of rapid responding and successful inhibition (Control group). Inhibition was measured using a standard laboratory measure of impulsivity, the stop-signal task. The groups appeared to respond to the experimental manipulation. The Restraint group responded more slowly and more cautiously (making fewer inhibition errors) than the Disinhibition group, with the performance of the Control group falling between the two experimental groups. Participants were subsequently given the chance to drink beer in a bogus taste test. The Restraint group consumed less beer than the Disinhibition and Control groups, which consumed similar quantities. The groups did not differ on measures of arousal or mood. Further, the two experimental groups (restraint and disinhibition mindsets) did not differ in self-reported alcohol craving after completing the stop-signal task. Beer consumption therefore appears to have been unaffected by craving but more susceptible to variations in levels of cognitive control. The researchers concluded that regular practice or exercise of restraint might transfer to regulating alcohol intake in individual problem drinkers. Table 7.1 outlines how different facets of impulsivity investigated in laboratory studies can generate ‘real-world’ applications aimed at boosting cognitive control.
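The stop-signal task is usually scored by estimating the stop-signal reaction time (SSRT), the latency of the covert stopping process. A common approach adjusts the stop-signal delay (SSD) with a staircase. The SSD is lengthened after a successful stop and shortened after an inhibition error. SSRT is then estimated with the simple ‘mean method’ (mean go reaction time minus mean SSD). The Python sketch below assumes a 250 ms starting SSD and 50 ms steps; these values and all the data are hypothetical. The text does not state how Jones et al. (2011) scored the task.

```python
from statistics import mean

def track_ssd(stop_outcomes, start_ssd=250, step=50, floor=0):
    """Staircase for stop-signal delay (ms): harder after a successful stop,
    easier after a failed stop (an inhibition error). This tends to hold
    successful stopping near 50% over a session."""
    ssd = start_ssd
    delays = []
    for stopped in stop_outcomes:
        delays.append(ssd)  # SSD actually used on this stop trial
        ssd = ssd + step if stopped else max(floor, ssd - step)
    return delays

def ssrt_mean_method(go_rts, delays):
    """Mean-method estimate: SSRT = mean go RT - mean SSD (both in ms)."""
    return mean(go_rts) - mean(delays)

# Hypothetical stop-trial outcomes (True = successfully inhibited).
outcomes = [True, False, True, True, False, False, True, False]
delays = track_ssd(outcomes)
go_rts = [480, 510, 495, 530, 470, 505]  # hypothetical go-trial RTs in ms
print(delays)
print(round(ssrt_mean_method(go_rts, delays), 1))
```

A longer SSRT indicates slower, less efficient inhibition. The mean method is the simplest estimator; other estimators, such as the integration method, are often preferred when go reaction times are skewed or drift across a session.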

Table 7.1 ‘Real-world’ analogues of experimental paradigms of impulsivity.
